The Shocking Truth in 2026: How Much Energy AI Consumes Per Query

Table of Contents

- Understanding How Much Energy AI Consumes Per Query

- AI Energy Consumption Per Query vs Traditional Search

- Why AI Uses More Electricity Than You Expect

- Real-World Example: AI Usage in US Small Businesses

- Global Impact of AI Energy Consumption

- Environmental Cost of AI Queries

- AI vs Search Engine Energy Comparison in Detail

- How Much Power Does AI Use at Scale

- Internal Strategy: Why This Topic Matters for SEO

- Future of AI Energy Consumption

- Conclusion: My Perspective as a Digital Marketer

- FAQ: Detailed Answers for AI and Search Optimization

When I first started using AI tools for content, automation, and digital marketing experiments, I was only focused on speed, efficiency, and output quality. Like most business owners and marketers in the United States, I saw AI as a growth tool — something that helps reduce workload, cut costs, and improve customer experience. But over time, I started asking a deeper question that most people ignore: how much energy AI consumes per query, and what that actually means at scale.

The answer is not as simple as it looks. Behind every single AI query, there is a complex infrastructure running in the background — massive data centers, high-performance GPUs, continuous cooling systems, and global electricity consumption that most users never think about. And once I started connecting the dots between AI energy consumption per query and the environmental cost of AI queries, I realized this is not just a technical topic — this is a business, sustainability, and future-of-internet issue.

If you want a broader understanding of the environmental impact of AI, I highly recommend reading this detailed breakdown: how AI is bad for the environment. That article explains the bigger picture, while this one goes deep into how much power AI uses per query and how it compares to traditional systems like search engines.

Understanding How Much Energy AI Consumes Per Query

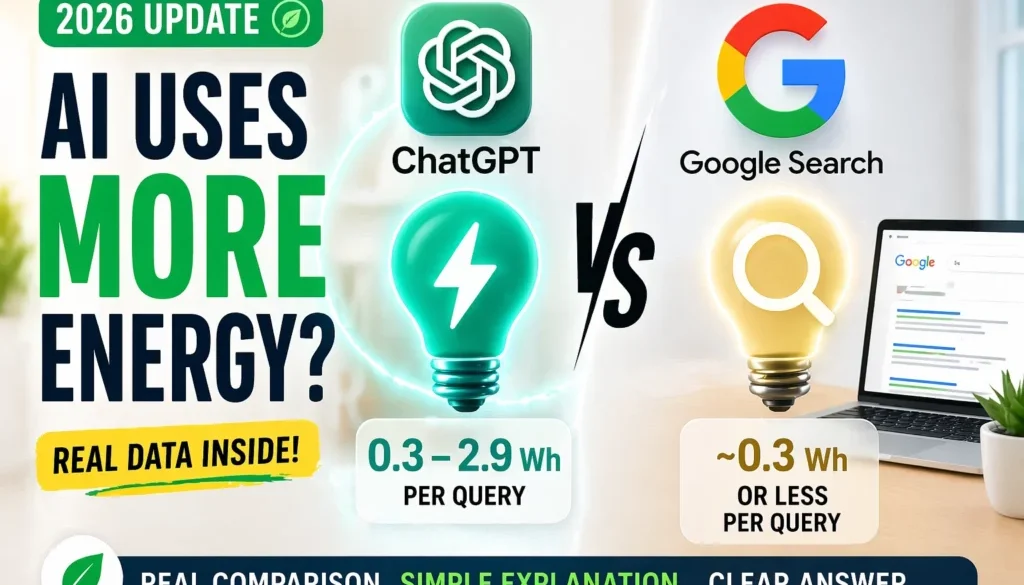

Let me break this down in the simplest way possible. When we talk about how much energy AI consumes per query, we are essentially asking how much electricity one interaction with an AI system requires. That includes processing your input, running it through a trained model, generating a response, and sending it back to your device. According to multiple research estimates, a single AI query can consume anywhere between 0.3 and 2.9 watt-hours of electricity, depending on the complexity of the task, the length of the response, and the infrastructure being used.

This may sound small, but when multiplied by millions or billions of queries per day, the numbers become extremely significant. To put this in perspective, tools like ChatGPT are used by millions of users daily, and each interaction contributes to global energy consumption. This is where the concept of AI carbon footprint per query becomes important, because it helps us understand the environmental cost of each interaction.
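To see how per-query figures add up, here is a minimal sketch that converts a per-query estimate into daily and yearly totals. The 0.3 and 2.9 Wh bounds are the range quoted above; the volume of 1 billion queries per day is a hypothetical round number chosen for illustration, not a published usage statistic.

```python
# Rough scale-up of per-query energy to daily and yearly totals.
# ASSUMPTION: 1 billion queries/day is a hypothetical round number,
# not a measured usage figure for any real AI tool.

WH_PER_MWH = 1_000_000

def daily_energy_mwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total daily energy in megawatt-hours."""
    return wh_per_query * queries_per_day / WH_PER_MWH

def yearly_energy_gwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total yearly energy in gigawatt-hours (365-day year)."""
    return daily_energy_mwh(wh_per_query, queries_per_day) * 365 / 1_000

QUERIES_PER_DAY = 1_000_000_000  # hypothetical volume

for wh in (0.3, 2.9):  # low and high per-query estimates from the text
    print(f"{wh} Wh/query -> {daily_energy_mwh(wh, QUERIES_PER_DAY):,.0f} MWh/day, "
          f"{yearly_energy_gwh(wh, QUERIES_PER_DAY):,.1f} GWh/year")
```

Under these assumptions the total lands between roughly 300 and 2,900 MWh per day; the real figure depends entirely on actual query volume and on which per-query estimate applies.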

AI Energy Consumption Per Query vs Traditional Search

ChatGPT vs Google: Which Uses More Energy Per Query?

When I started digging into how much energy AI consumes per query, one comparison kept coming up again and again — ChatGPT vs Google Search. At first, I assumed both would be similar, since both answer questions. But the reality is completely different once you look at how each system works behind the scenes. Tools like ChatGPT generate responses in real time using large language models, while Google Search retrieves already indexed results. That single difference changes everything when it comes to energy consumption.

Real Energy Data Comparison

Let’s break this down using actual numbers from research and industry estimates:

- ChatGPT query energy usage: ~0.3 to 2.9 watt-hours per query (Source: Epoch AI)

- Google Search energy usage: ~0.0003 kWh (~0.3 watt-hours or less) (Source: Google Environmental Report)

- AI queries can use 10x to 100x more energy depending on complexity (Source: United Nations (UNRIC))
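A quick calculation shows what ratio the first two figures imply on their own. Using only those numbers, an AI query uses roughly 1x to 10x the energy of a search query; the wider 10x to 100x range in the UNRIC bullet is tied to query complexity, which these baseline figures do not capture. The sketch below simply divides the quoted values.

```python
# Ratio of AI to search energy per query, using only the figures listed above.
# NOTE: these are published estimates, not measurements; real values vary
# by year, hardware, and query type.

SEARCH_WH = 0.3            # Google Search, per query (figure from the list)
AI_WH_RANGE = (0.3, 2.9)   # ChatGPT, low and high per-query estimates

def energy_ratio(ai_wh: float, search_wh: float = SEARCH_WH) -> float:
    """How many times more energy an AI query uses than a search query."""
    return ai_wh / search_wh

low, high = (energy_ratio(wh) for wh in AI_WH_RANGE)
print(f"AI query uses {low:.1f}x to {high:.1f}x the energy of a search query")
```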

Comparison Table: AI vs Search Engine Energy Usage

| Factor | ChatGPT (AI) | Google Search |
|---|---|---|
| Energy per query | 0.3 – 2.9 Wh | ~0.3 Wh or less |
| Process type | Generates answers | Retrieves indexed links |
| Hardware usage | GPU-intensive | CPU-optimized |
| Computation level | High (real-time processing) | Low (data retrieval) |

Simple Explanation (Easy to Understand)

I like to explain it like this — when I use Google, I’m basically asking a librarian to find a book. But when I use AI, I’m asking a writer to create a brand-new answer instantly. And creating something from scratch always takes more effort and more energy than just finding something that already exists. That’s why when people ask how much energy AI consumes per query, the answer is always higher compared to traditional search engines. It’s not just about getting results — it’s about generating them.

Real-World Example

Let’s say a small business owner in California runs 100 queries per day:

- Using Google Search → minimal energy impact

- Using AI tools → significantly higher energy usage
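For the 100-queries-per-day example, the arithmetic can be sketched as follows. It reuses the per-query figures from the comparison list above; running the same 100 queries every day of a 365-day year is an assumption made for illustration.

```python
# Daily and yearly energy for the hypothetical California business
# running 100 queries per day, using the per-query figures quoted earlier.
# ASSUMPTION: the same 100 queries are run every day of a 365-day year.

QUERIES_PER_DAY = 100
SEARCH_WH = 0.3                    # per search query
AI_WH_LOW, AI_WH_HIGH = 0.3, 2.9   # per AI query, low and high estimates

def totals(wh_per_query: float, queries_per_day: int = QUERIES_PER_DAY) -> tuple[float, float]:
    """Return (watt-hours per day, kilowatt-hours per year)."""
    per_day_wh = wh_per_query * queries_per_day
    per_year_kwh = per_day_wh * 365 / 1000
    return per_day_wh, per_year_kwh

for label, wh in [("Search", SEARCH_WH), ("AI (low)", AI_WH_LOW), ("AI (high)", AI_WH_HIGH)]:
    day, year = totals(wh)
    print(f"{label:10} {day:6.0f} Wh/day  {year:7.2f} kWh/year")
```

With these inputs, search comes to about 30 Wh per day while AI ranges from about 30 to 290 Wh per day, so the gap for a single business is modest in absolute terms and grows only when multiplied across many users.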

Now multiply that across millions of users in the United States. 👉 That’s where the real difference between AI energy consumption per query and traditional search becomes visible. For a complete breakdown of how this impacts the environment at scale, you can read: how AI is bad for the environment.

One of the most common comparisons people make is between AI tools and search engines. Specifically, the debate around ChatGPT energy usage vs Google Search has gained a lot of attention, and this comparison reveals a lot about how much power AI uses compared to traditional systems. When you use Google Search, the system is primarily retrieving already indexed information; it does not generate new content in real time, which makes it significantly more energy-efficient than an AI model.

In contrast, AI systems generate responses from scratch using large language models. This requires far more computational power, often on GPUs that draw hundreds or even thousands of watts during operation. This is why an AI vs search engine energy comparison consistently shows AI as the more energy-intensive option.

Why AI Uses More Electricity Than You Expect

The reason AI energy consumption per query is so high comes down to how these systems are built. AI models are trained on massive datasets and consist of billions of parameters. Every time you ask a question, the model processes your input through multiple layers of computation before generating an answer.

This process is not lightweight. It involves parallel computing, high-speed memory usage, and continuous energy flow. When scaled across global users, the electricity used by AI models becomes a major factor in global energy demand. In the United States alone, data centers already consume a significant portion of total electricity, and with the rise of AI, this number is expected to increase. According to the International Energy Agency, data centers are becoming one of the fastest-growing sources of electricity demand worldwide.
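One way to see why generating an answer is expensive is a back-of-the-envelope estimate based on the widely used approximation that a dense transformer performs about 2 × N floating-point operations per generated token, where N is the parameter count. Every input below (model size, response length, GPU throughput, utilization, power draw, facility overhead) is a hypothetical assumption, not a measurement of ChatGPT or any specific system.

```python
# Back-of-the-envelope energy for one AI query, using the common
# approximation of ~2 * N FLOPs per generated token (N = parameters).
# ALL inputs are hypothetical assumptions chosen for illustration.

from dataclasses import dataclass

JOULES_PER_WH = 3600

@dataclass
class InferenceSetup:
    params: float          # model parameters
    output_tokens: int     # tokens generated per response
    peak_flops: float      # accelerator peak FLOP/s
    utilization: float     # fraction of peak actually achieved (0-1)
    power_watts: float     # accelerator power draw while busy
    overhead: float = 1.0  # facility multiplier for cooling etc. (PUE-like)

def query_energy_wh(s: InferenceSetup) -> float:
    flops = 2 * s.params * s.output_tokens             # compute for the reply
    seconds = flops / (s.peak_flops * s.utilization)   # accelerator busy time
    joules = seconds * s.power_watts * s.overhead      # energy incl. overhead
    return joules / JOULES_PER_WH

setup = InferenceSetup(
    params=100e9,       # hypothetical 100B-parameter dense model
    output_tokens=500,  # hypothetical response length
    peak_flops=1e15,    # assumed ~1 PFLOP/s accelerator
    utilization=0.10,   # assumed 10% of peak during inference
    power_watts=700,    # assumed accelerator power draw
    overhead=1.2,       # assumed facility overhead
)
print(f"Estimated energy: {query_energy_wh(setup):.2f} Wh per query")
```

With these inputs the estimate is about 0.23 Wh, the same order of magnitude as the low end of the 0.3 to 2.9 Wh range. It ignores prompt processing and idle capacity, so treat it as an order-of-magnitude sketch; longer responses, larger models, or lower utilization raise it proportionally.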

Real-World Example: AI Usage in US Small Businesses

Let’s take a practical example. Imagine a small bakery in Texas operating as an LLC. The owner uses AI tools daily for marketing, customer communication, and content creation. From writing Instagram captions to generating email campaigns, each interaction with AI consumes energy. Now multiply that by thousands of similar businesses across different states — California, New York, Florida — each using AI to optimize their marketing budget and attract customers online. Suddenly, the environmental cost of AI queries becomes a nationwide concern. This is not about avoiding AI. It is about understanding how much energy AI consumes per query and using it responsibly.

Global Impact of AI Energy Consumption

Globally, AI is driving massive growth in data center infrastructure. According to reports from Nature, training large AI models alone can consume as much energy as hundreds of households use in a year. When you combine training and inference (daily queries), the numbers become even more significant. This is where AI carbon footprint per query becomes a critical metric. It helps researchers and policymakers understand the long-term sustainability of AI systems and their impact on climate change.
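The carbon footprint of a query is its energy use multiplied by the carbon intensity of the electricity supplying the data center. A minimal sketch follows; the three grid intensities are rough illustrative assumptions, not figures from Nature or any other source cited in this article.

```python
# Convert per-query energy into an estimated carbon footprint.
# ASSUMPTION: the grid carbon intensities are illustrative placeholders,
# not measured values for any specific data center or region.

def grams_co2_per_query(wh_per_query: float, grid_g_per_kwh: float) -> float:
    """grams of CO2 = kWh used x grams of CO2 emitted per kWh generated."""
    return (wh_per_query / 1000) * grid_g_per_kwh

GRIDS = {
    "low-carbon grid (assumed)": 50,
    "mixed grid (assumed)": 400,
    "coal-heavy grid (assumed)": 800,
}

for name, intensity in GRIDS.items():
    low = grams_co2_per_query(0.3, intensity)   # low per-query estimate
    high = grams_co2_per_query(2.9, intensity)  # high per-query estimate
    print(f"{name}: {low:.3f} to {high:.3f} g CO2 per query")
```

The same query can have a footprint that differs by an order of magnitude depending on where and when it is served, which is why per-query carbon figures vary so much between studies.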

Environmental Cost of AI Queries

The environmental cost of AI queries goes beyond electricity. Data centers require cooling systems to prevent overheating, and these systems often use large amounts of water. According to research published on ScienceDirect, water consumption in data centers is increasing rapidly due to AI workloads. This means every time you use AI, you are indirectly contributing to both energy consumption and water usage. While one query may not seem significant, the cumulative effect is what matters.
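Water use can be estimated the same way as energy, using Water Usage Effectiveness (WUE), a data center metric defined by The Green Grid as liters of water consumed on site per kWh of IT energy. The sketch below covers on-site cooling water only, not water used in generating the electricity, and the two WUE values are hypothetical examples.

```python
# Estimate on-site cooling water per AI query from a data center's
# Water Usage Effectiveness (WUE): liters of water per kWh of IT energy.
# ASSUMPTION: the WUE values are hypothetical; real values vary widely
# with climate, season, and cooling design.

def water_ml_per_query(wh_per_query: float, wue_l_per_kwh: float) -> float:
    """Milliliters of cooling water attributable to one query."""
    kwh = wh_per_query / 1000
    return kwh * wue_l_per_kwh * 1000  # liters -> milliliters

def water_liters_per_million(wh_per_query: float, wue_l_per_kwh: float) -> float:
    """Liters of cooling water for one million queries."""
    return water_ml_per_query(wh_per_query, wue_l_per_kwh) * 1_000_000 / 1000

for wue in (0.5, 1.8):     # hypothetical efficient vs. less efficient sites
    for wh in (0.3, 2.9):  # per-query energy range quoted in this article
        print(f"WUE {wue} L/kWh, {wh} Wh/query: "
              f"{water_ml_per_query(wh, wue):.2f} mL/query, "
              f"{water_liters_per_million(wh, wue):,.0f} L per million queries")
```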

AI vs Search Engine Energy Comparison in Detail

Let’s go deeper into AI vs search engine energy comparison. A traditional search engine query may consume a fraction of the energy used by an AI query. This is because search engines rely on indexing and retrieval, while AI relies on generation. In simple terms, search engines find answers, while AI creates answers. Creation requires more computation, and more computation requires more power. This is why businesses that rely heavily on AI should start thinking about efficiency and optimization. Using AI wisely can help reduce unnecessary energy consumption while still benefiting from its capabilities.

How Much Power Does AI Use at Scale

When we scale this discussion, the question changes from how much energy AI consumes per query to how much power AI uses globally. And the answer is massive. According to Bloomberg, AI-related electricity demand is expected to grow significantly in the coming years, potentially reshaping global energy markets. This growth is driven by increasing adoption across industries, from healthcare to finance to marketing. Every industry is integrating AI, and each integration adds to the overall energy demand.

Internal Strategy: Why This Topic Matters for SEO

From an SEO perspective, targeting keywords like how much energy AI consumes per query, AI energy consumption per query, and environmental cost of AI queries allows you to build topical authority in a growing niche. You can also connect this article with other related content such as how US small businesses get customers online using tools, Google AI overview traffic strategies, and ranking in AI-driven search results. This creates a strong internal linking structure that improves both user experience and search rankings.

Future of AI Energy Consumption

The future of AI energy consumption depends on two factors: efficiency and demand. While companies are working on optimizing models and using renewable energy, the demand for AI is growing at a much faster rate. This means even if each query becomes more efficient, the total energy consumption may still increase. This is a classic example of the rebound effect, where efficiency improvements lead to higher overall usage.
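The rebound effect comes down to one multiplication: total energy equals energy per query times the number of queries. This sketch uses hypothetical factors to show that if each query becomes twice as efficient while query volume triples, total consumption still rises by 50%.

```python
# Illustrating the rebound effect: total energy = energy per query x queries.
# ASSUMPTION: the efficiency and demand growth factors are hypothetical.

def total_energy_change(efficiency_gain: float, demand_growth: float) -> float:
    """Factor by which total energy consumption changes.

    efficiency_gain: how many times less energy each query needs (2.0 = half).
    demand_growth:   how many times more queries are served (3.0 = triple).
    """
    return demand_growth / efficiency_gain

change = total_energy_change(efficiency_gain=2.0, demand_growth=3.0)
print(f"Total energy changes by a factor of {change:.2f}")
```

Total consumption only falls when efficiency improves faster than demand grows, that is, when `efficiency_gain` exceeds `demand_growth`.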

Conclusion: My Perspective as a Digital Marketer

From my experience working in digital marketing and building platforms like Peplio, I see AI as a powerful tool. It helps businesses scale, automate processes, and improve customer engagement. But at the same time, understanding how much energy AI consumes per query gives us a more responsible perspective. We don’t need to stop using AI. We just need to use it smarter. Focus on meaningful queries, avoid unnecessary usage, and stay aware of the environmental cost of AI queries. Because at the end of the day, technology should not only drive growth — it should also support sustainability.

FAQ: Detailed Answers for AI and Search Optimization

How much energy does AI consume per query in real terms?

In real-world scenarios, how much energy AI consumes per query depends on multiple factors, including model size, infrastructure, and query complexity. Typical estimates range from 0.3 to 2.9 watt-hours per query. However, enterprise-level AI systems and longer queries can increase this consumption significantly. When multiplied across millions of users, this becomes a major contributor to global energy usage.

Is AI energy consumption per query higher than traditional systems?

Yes, AI energy consumption per query is significantly higher than traditional systems like search engines. This is because AI generates responses instead of retrieving them, requiring more computational power and electricity.

What is the environmental cost of AI queries?

The environmental cost of AI queries includes electricity consumption, carbon emissions, and water usage for cooling systems. Each query contributes a small amount, but at scale, the impact becomes substantial.

How does ChatGPT’s energy usage compare with Google Search?

Comparing ChatGPT’s energy usage with Google Search shows a clear difference. AI systems require more energy due to real-time content generation, while search engines rely on efficient indexing and retrieval processes.

How can businesses reduce AI energy usage?

Businesses can reduce AI energy usage by optimizing workflows, avoiding redundant queries, and using AI strategically. This not only reduces costs but also minimizes environmental impact.
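As one concrete way to avoid redundant queries, a business tool could cache answers to repeated prompts so identical requests never reach the model twice. This is a minimal sketch: `ask_model` is a hypothetical stand-in for whatever AI service is actually used, the sample prompts are invented, and 2.9 Wh per query is the high-end estimate quoted in this article.

```python
# Sketch: skip redundant AI queries by caching answers to repeated prompts.
# ASSUMPTIONS: ask_model() is a hypothetical placeholder for a real AI tool,
# and 2.9 Wh/query is the high-end estimate quoted in this article.

from functools import lru_cache

WH_PER_QUERY = 2.9
model_calls = 0  # counts how many prompts actually reached the model

def ask_model(prompt: str) -> str:
    """Hypothetical placeholder for a real AI API call."""
    global model_calls
    model_calls += 1
    return f"(model answer for: {prompt})"

def normalize(prompt: str) -> str:
    # Treat prompts that differ only in case or spacing as the same request.
    return " ".join(prompt.lower().split())

@lru_cache(maxsize=1024)
def _cached_answer(normalized_prompt: str) -> str:
    return ask_model(normalized_prompt)

def ask(prompt: str) -> str:
    return _cached_answer(normalize(prompt))

prompts = [
    "Write a caption for our sourdough",
    "write a caption for our  sourdough ",
    "Draft a holiday email",
    "Write a caption for our sourdough",
]
for p in prompts:
    ask(p)

avoided = len(prompts) - model_calls
print(f"Model calls: {model_calls}, avoided: {avoided}, "
      f"about {avoided * WH_PER_QUERY:.1f} Wh saved")
```

Only exact repeats (after normalization) are caught here; prompts that are similar in meaning but worded differently still go to the model.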